Getting an NVIDIA GPU recognized (for tensorflow, etc.) on Windows + WSL2

I wrote this up once before,

Python - How to use an NVIDIA GPU with tensorflow 2.6

; https://www.sysnet.pe.kr/2/0/12816

but when I set things up again recently ^^; the GPU was not recognized. A search turned up this explanation: ^^

As of now (2025-05-23), pip install gives you tensorflow==2.19, which does not support GPUs on native Windows. So with Windows + Python, you are limited to the CPU version, which is only really suitable for testing.
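TensorFlow's install documentation states that 2.10 was the last release with GPU support on native Windows. The sketch below encodes that cutoff as a simple version check. The helper name `native_windows_gpu_supported` is hypothetical, and the check compares only the major.minor part of the version string.

```python
import platform

# Per TensorFlow's install docs, 2.10 was the last release with GPU support on native Windows.
LAST_NATIVE_WINDOWS_GPU = (2, 10)

def native_windows_gpu_supported(tf_version: str) -> bool:
    """Hypothetical helper: True if this TensorFlow version can use a GPU on native Windows."""
    major, minor = (int(part) for part in tf_version.split(".")[:2])
    return (major, minor) <= LAST_NATIVE_WINDOWS_GPU

if __name__ == "__main__":
    version = "2.19.0"  # the version pip installed at the time of writing
    if platform.system() == "Windows" and not native_windows_gpu_supported(version):
        print(f"tensorflow {version}: CPU only on native Windows; use WSL2 for GPU")
    else:
        print(f"tensorflow {version}: native-Windows GPU rule does not block this setup")
```

With 2.19 the check fails, which matches the search result quoted above. Versions up to 2.10 (for example, the 2.6 used in the earlier post) pass.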

The one saving grace is that GPU support does work under WSL2, so this time I'll write up how to set that up.

Starting from the official documentation is the surest route, right? ^^

2. Getting Started with CUDA on WSL 2

; https://docs.nvidia.com/cuda/wsl-user-guide/index.html#getting-started-with-cuda-on-wsl

If you have the old GPG key, delete it first:

$ sudo apt-key del 7fa2af80

OK

Next, follow the "download page for WSL-Ubuntu" link and select "Linux" / "x86_64" / "WSL-Ubuntu" / "2.0" / "deb (local)". The page then expands to show the following commands:

$ wget https://developer.download.nvidia.com/compute/cuda/repos/wsl-ubuntu/x86_64/cuda-wsl-ubuntu.pin

$ sudo mv cuda-wsl-ubuntu.pin /etc/apt/preferences.d/cuda-repository-pin-600

$ wget https://developer.download.nvidia.com/compute/cuda/12.9.0/local_installers/cuda-repo-wsl-ubuntu-12-9-local_12.9.0-1_amd64.deb

$ sudo dpkg -i cuda-repo-wsl-ubuntu-12-9-local_12.9.0-1_amd64.deb

$ sudo cp /var/cuda-repo-wsl-ubuntu-12-9-local/cuda-*-keyring.gpg /usr/share/keyrings/

$ sudo apt-get update

$ sudo apt-get -y install cuda-toolkit-12-9

Just ^^ run those commands one after another, no thinking required. (They may change in the future, so be sure to use the script provided at that link.)

Once the installation finishes, you can check it roughly like this:

$ /usr/local/cuda-12.9/bin/nvcc --version

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2025 NVIDIA Corporation

Built on Wed_Apr__9_19:24:57_PDT_2025

Cuda compilation tools, release 12.9, V12.9.41

Build cuda_12.9.r12.9/compiler.35813241_0
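To script this check instead of reading the banner by eye, you can parse the "release X.Y" token from the `nvcc --version` output. The following is a minimal sketch. The helper names are hypothetical, and the default path assumes the `/usr/local/cuda-12.9` location used above.

```python
import re
import shutil
import subprocess
from typing import Optional

def parse_nvcc_release(output: str) -> Optional[str]:
    """Extract the "release X.Y" number from `nvcc --version` output."""
    match = re.search(r"release (\d+\.\d+)", output)
    return match.group(1) if match else None

def installed_nvcc_release(nvcc_path: str = "/usr/local/cuda-12.9/bin/nvcc") -> Optional[str]:
    """Run nvcc at the given path (default assumes the 12.9 install above) and return its release."""
    if shutil.which(nvcc_path) is None:  # missing or not executable
        return None
    result = subprocess.run([nvcc_path, "--version"], capture_output=True, text=True, check=False)
    return parse_nvcc_release(result.stdout)

if __name__ == "__main__":
    print("nvcc release:", installed_nvcc_release() or "not found")
```

Given the output above, `parse_nvcc_release` returns `"12.9"`. If you install a different toolkit version, you need to change the path.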

$ which nvidia-smi

/usr/lib/wsl/lib/nvidia-smi

$ nvidia-smi

Wed May 21 14:02:16 2025

+-----------------------------------------------------------------------------------------+

| NVIDIA-SMI 575.51.02 Driver Version: 576.02 CUDA Version: 12.9 |

|-----------------------------------------+------------------------+----------------------+

| GPU Name Persistence-M | Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap | Memory-Usage | GPU-Util Compute M. |

| | | MIG M. |

|=========================================+========================+======================|

| 0 NVIDIA GeForce RTX 4060 Ti On | 00000000:01:00.0 On | N/A |

| 0% 29C P8 10W / 160W | 4985MiB / 8188MiB | 9% Default |

| | | N/A |

+-----------------------------------------+------------------------+----------------------+

+-----------------------------------------------------------------------------------------+

| Processes: |

| GPU GI CI PID Type Process name GPU Memory |

| ID ID Usage |

|=========================================================================================|

| No running processes found |

+-----------------------------------------------------------------------------------------+
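The "CUDA Version" in the nvidia-smi banner is the highest CUDA version the installed driver supports. It is not necessarily the version of the toolkit you installed. Under WSL2, nvidia-smi comes from the Windows driver, which is why `which` points into `/usr/lib/wsl/lib`. The sketch below assumes you want to confirm that the toolkit is not newer than the driver allows. It pulls both numbers from the banner text, and the helper names are hypothetical.

```python
import re
import shutil
import subprocess
from typing import Optional

def parse_smi_header(text: str) -> dict:
    """Pull the driver version and the driver's supported CUDA version from nvidia-smi output."""
    info = {}
    patterns = (("driver", r"Driver Version:\s*([\d.]+)"), ("cuda", r"CUDA Version:\s*([\d.]+)"))
    for key, pattern in patterns:
        match = re.search(pattern, text)
        if match:
            info[key] = match.group(1)
    return info

def toolkit_within_driver(toolkit: str, driver_cuda: str) -> bool:
    """True if the toolkit's major.minor is not newer than the CUDA version the driver reports."""
    def as_tuple(version: str) -> tuple:
        return tuple(int(part) for part in version.split(".")[:2])
    return as_tuple(toolkit) <= as_tuple(driver_cuda)

def run_nvidia_smi() -> Optional[str]:
    """Run nvidia-smi if it is on PATH (under WSL2 it typically lives in /usr/lib/wsl/lib)."""
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return None
    return subprocess.run([exe], capture_output=True, text=True, check=False).stdout

if __name__ == "__main__":
    output = run_nvidia_smi()
    print(parse_smi_header(output) if output else "nvidia-smi not found")
```

For the banner above, the parse gives driver 576.02 and CUDA 12.9, so toolkit 12.9 passes the comparison.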

Now create a conda environment and install cuDNN with pip:

$ conda create --name pybuild python=3.10 -y

$ conda activate pybuild

$ python -m pip install nvidia-cudnn-cu12
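If you are not sure the cuDNN wheel actually landed in the active conda environment, you can ask the package metadata directly. The following is a minimal sketch using the standard library's `importlib.metadata`. The helper name `pip_package_version` is hypothetical.

```python
from importlib import metadata
from typing import Optional

def pip_package_version(name: str) -> Optional[str]:
    """Return the installed version of a pip distribution, or None if it is not installed."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None

if __name__ == "__main__":
    print("nvidia-cudnn-cu12:", pip_package_version("nvidia-cudnn-cu12") or "not installed")
```

Run this with the same `python` you used for `pip install`. Otherwise it inspects a different environment.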

Then install tensorflow and write the example code:

$ python -m pip install tensorflow

$ cat test.py

import tensorflow as tf

print("Num GPUs Available: ", len(tf.config.list_physical_devices('GPU')))

from tensorflow.python.client import device_lib

print(device_lib.list_local_devices())
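test.py imports `device_lib` from `tensorflow.python`, which is an internal module. The public `tf.config` API can report similar information. The sketch below is an alternative, assuming TensorFlow 2.3 or later, where `tf.config.experimental.get_device_details` exists. The import is guarded so that the script also runs where TensorFlow is missing. `TF_CPP_MIN_LOG_LEVEL` only filters TensorFlow's C++ log levels, and it may not hide every startup line shown below.

```python
import os

# Must be set before importing TensorFlow; "2" filters C++ INFO and WARNING messages.
# Lines printed before TensorFlow's logging is initialized may still appear.
os.environ.setdefault("TF_CPP_MIN_LOG_LEVEL", "2")

try:
    import tensorflow as tf
except ImportError:  # lets the sketch run even where TensorFlow is not installed
    tf = None

def describe_gpus() -> list:
    """Return (device name, details dict) for each GPU TensorFlow can see."""
    if tf is None:
        return []
    gpus = tf.config.list_physical_devices("GPU")
    return [(gpu.name, tf.config.experimental.get_device_details(gpu)) for gpu in gpus]

if __name__ == "__main__":
    if tf is not None:
        print("Built with CUDA:", tf.test.is_built_with_cuda())
    for name, details in describe_gpus():
        print(name, details.get("device_name"), details.get("compute_capability"))
```

An empty list means TensorFlow is missing or cannot see a GPU. In that case, compare against the CPU-only case described below.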

$ python -m pip install matplotlib

Running test.py produces the following output:

$ python test.py

2025-04-05 14:25:36.487499: E external/local_xla/xla/stream_executor/cuda/cuda_fft.cc:467] Unable to register cuFFT factory: Attempting to register factory for plugin cuFFT when one has already been registered

WARNING: All log messages before absl::InitializeLog() is called are written to STDERR

E0000 00:00:1747805136.595973 99583 cuda_dnn.cc:8579] Unable to register cuDNN factory: Attempting to register factory for plugin cuDNN when one has already been registered

E0000 00:00:1747805136.629660 99583 cuda_blas.cc:1407] Unable to register cuBLAS factory: Attempting to register factory for plugin cuBLAS when one has already been registered

W0000 00:00:1747805136.877554 99583 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

W0000 00:00:1747805136.877642 99583 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

W0000 00:00:1747805136.877650 99583 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

W0000 00:00:1747805136.877653 99583 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

2025-04-05 14:25:36.899923: I tensorflow/core/platform/cpu_feature_guard.cc:210] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

To enable the following instructions: AVX2 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.

Num GPUs Available: 1

I0000 00:00:1747805140.710462 99583 gpu_device.cc:2019] Created device /device:GPU:0 with 5529 MB memory: -> device: 0, name: NVIDIA GeForce RTX 4060 Ti, pci bus id: 0000:01:00.0, compute capability: 8.9

[name: "/device:CPU:0"

device_type: "CPU"

memory_limit: 268435456

locality {

}

incarnation: 1437687304491415990

xla_global_id: -1

, name: "/device:GPU:0"

device_type: "GPU"

memory_limit: 5797576704

locality {

bus_id: 1

links {

}

}

incarnation: 10535418989602502258

physical_device_desc: "device: 0, name: NVIDIA GeForce RTX 4060 Ti, pci bus id: 0000:01:00.0, compute capability: 8.9"

xla_global_id: 416903419

]

초기에 오류 메시지가 나오긴 하는데, 일단 GPU 장치가 인식은 됩니다. 이후

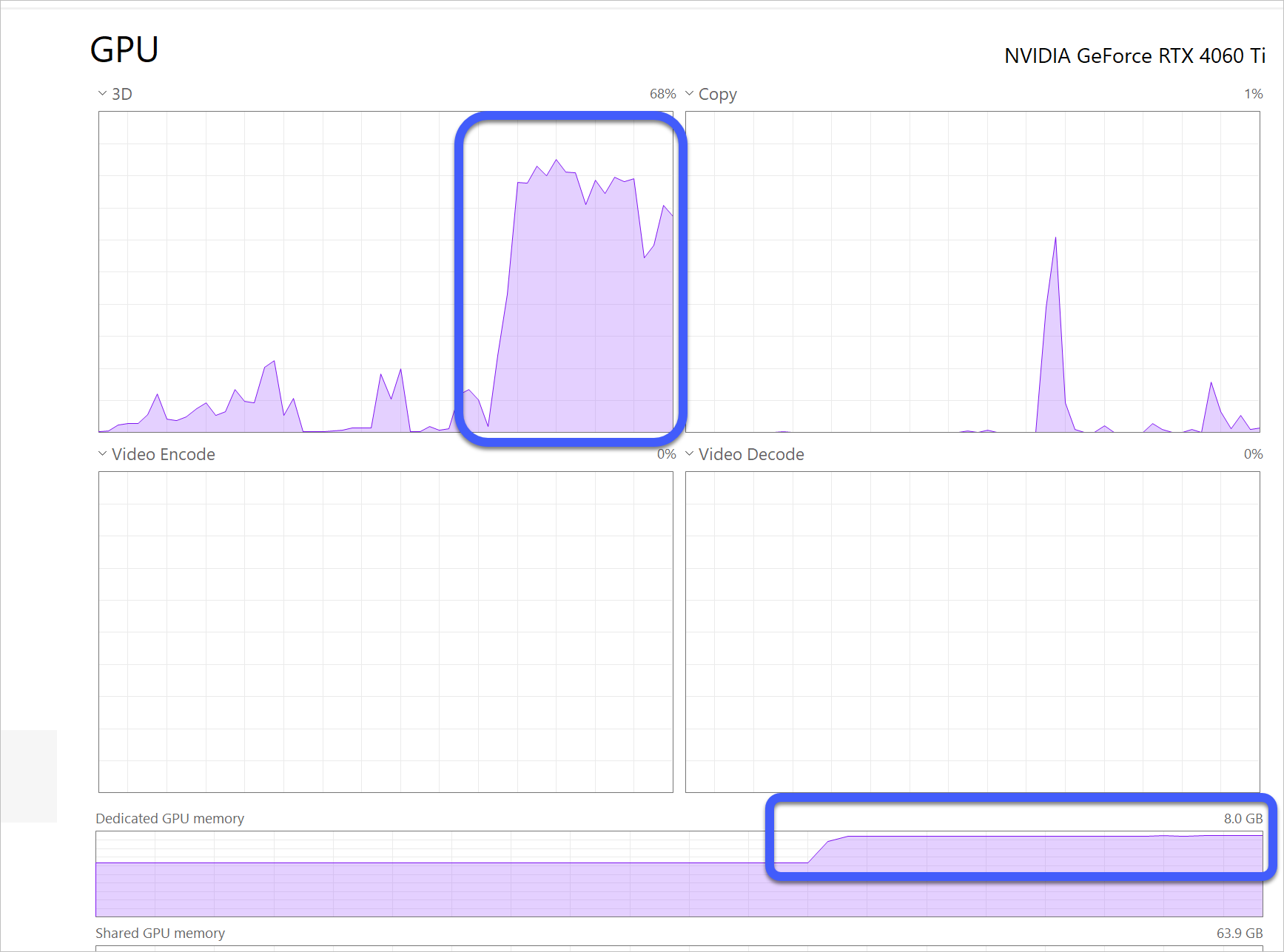

예전에 작성했던 머신 러닝 예제 코드를 돌리면 작업 관리자의 GPU 사용량이 이렇게 올라가는 것을 확인할 수 있습니다.
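Task Manager confirms GPU activity. You can also confirm placement from inside Python by pinning an operation to the GPU and checking the device of the result tensor. The following is a minimal sketch with a guarded import. The helper name `matmul_device` and the 1024x1024 size are arbitrary choices.

```python
from typing import Optional

try:
    import tensorflow as tf
except ImportError:  # lets the sketch run even where TensorFlow is not installed
    tf = None

def matmul_device() -> Optional[str]:
    """Run one matrix multiply on /GPU:0 and return the device the result lives on."""
    if tf is None or not tf.config.list_physical_devices("GPU"):
        return None
    with tf.device("/GPU:0"):
        a = tf.random.uniform((1024, 1024))
        b = tf.random.uniform((1024, 1024))
        c = tf.matmul(a, b)
    return c.device  # e.g. a string ending in "device:GPU:0" when the GPU is used

if __name__ == "__main__":
    print(matmul_device() or "no GPU available to TensorFlow")
```

For a broader view, `tf.debugging.set_log_device_placement(True)` logs the device that each operation runs on.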

For reference, if you run the example code (list_physical_devices) without the CUDA Toolkit or cuDNN installed, you get this instead:

$ python test.py

2025-04-05 14:14:24.671434: E external/local_xla/xla/stream_executor/cuda/cuda_fft.cc:467] Unable to register cuFFT factory: Attempting to register factory for plugin cuFFT when one has already been registered

WARNING: All log messages before absl::InitializeLog() is called are written to STDERR

E0000 00:00:1747804464.812065 97533 cuda_dnn.cc:8579] Unable to register cuDNN factory: Attempting to register factory for plugin cuDNN when one has already been registered

E0000 00:00:1747804464.849271 97533 cuda_blas.cc:1407] Unable to register cuBLAS factory: Attempting to register factory for plugin cuBLAS when one has already been registered

W0000 00:00:1747804465.141418 97533 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

W0000 00:00:1747804465.141520 97533 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

W0000 00:00:1747804465.141526 97533 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

W0000 00:00:1747804465.141529 97533 computation_placer.cc:177] computation placer already registered. Please check linkage and avoid linking the same target more than once.

2025-04-05 14:14:25.174985: I tensorflow/core/platform/cpu_feature_guard.cc:210] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

To enable the following instructions: AVX2 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.

W0000 00:00:1747804469.517589 97533 gpu_device.cc:2341] Cannot dlopen some GPU libraries. Please make sure the missing libraries mentioned above are installed properly if you would like to use GPU. Follow the guide at https://www.tensorflow.org/install/gpu for how to download and setup the required libraries for your platform.

Skipping registering GPU devices...

Num GPUs Available: 0

W0000 00:00:1747804469.522848 97533 gpu_device.cc:2341] Cannot dlopen some GPU libraries. Please make sure the missing libraries mentioned above are installed properly if you would like to use GPU. Follow the guide at https://www.tensorflow.org/install/gpu for how to download and setup the required libraries for your platform.

Skipping registering GPU devices...

[name: "/device:CPU:0"

device_type: "CPU"

memory_limit: 268435456

locality {

}

incarnation: 6212249524767843787

xla_global_id: -1

]

As you can see, only "/device:CPU:0" is listed.
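The "Cannot dlopen some GPU libraries" warning means the dynamic loader could not open one or more CUDA libraries. The sketch below tries `ctypes.CDLL` on a list of library names, which can narrow down which ones the system loader cannot find. The sonames are assumptions for CUDA 12 and cuDNN 9, so adjust them for other versions. TensorFlow can also locate pip-installed NVIDIA libraries through its own search paths. As a result, this check may report `libcudnn` as missing even when TensorFlow can load it.

```python
import ctypes

# Assumed sonames for CUDA 12 / cuDNN 9; libcuda.so.1 is provided under /usr/lib/wsl/lib on WSL2.
CANDIDATE_LIBS = ("libcuda.so.1", "libcudart.so.12", "libcublas.so.12", "libcufft.so.11", "libcudnn.so.9")

def check_dlopen(names=CANDIDATE_LIBS) -> dict:
    """Try to load each library through the system loader; map name -> True if it loaded."""
    results = {}
    for name in names:
        try:
            ctypes.CDLL(name)
            results[name] = True
        except OSError:  # not found on the loader path, or failed to load
            results[name] = False
    return results

if __name__ == "__main__":
    for name, ok in check_dlopen().items():
        print(f"{name:20} {'OK' if ok else 'missing'}")
```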

[I'd like to share thoughts on this post with all of you. If anything is wrong, incomplete, or unclear, please feel free to leave a comment at any time.]